Think about every time you share information about yourself online – you sign up for a product or fill out a form, knowingly handing over details about yourself. It could be one, two, or ten times a day – likely at least hundreds of times in a given year.

What is the assumption underlying all those instances of information sharing?

Is it… that you’ll get some value from the product you’re signing up for in return? Sure, typically – but the Internet is filled with useless stuff, so you might just be signing up for an app that says “yo.”

Is it… that the information you provide will be jealously guarded, never to be leaked? Hahaha – of course not. Data breaches happen every. Single. Day.

Is it that the data you share is used for a specific purpose for which you are granting permission? YES!

Even while the cynic in every one of us knows that the product will likely use the information for reasons other than those explicitly stated (more on that later), the base assumption for sharing information online is that, yes, we are sharing for a specific purpose.

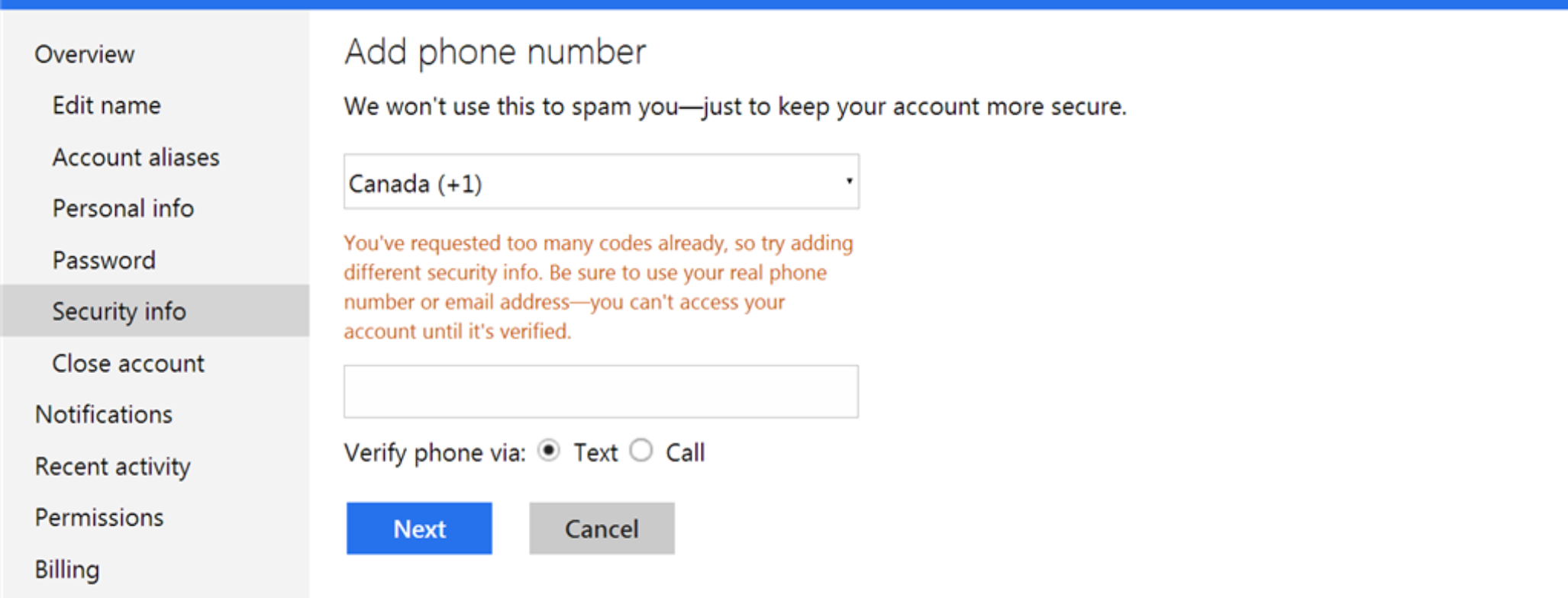

Here’s a screenshot from a major technology company whose products you probably use regularly:

“We won’t use this to spam you – just to keep your account more secure.”

Here, a big tech company wants you to take a specific action – add your phone number – so that their entire platform is more secure. To get you to do it, they tell you that the purpose is limited. This is the big bad secret of data sharing online: everyone recognizes that purpose and permission are essential, even if they don’t always respect purpose!

It’s in instances like the above – when stating a purpose will get product users to share information they otherwise might not – that the curtain is lifted a bit, outside the paragraphs of legalese in your typical T&C document. Spelling out purpose only when it is convenient doesn’t seem ethical if you stop and think about it, does it?

It’s not about “privacy” defined as a complete and total, Walden-esque shutting off from society in a cabin in the woods. Almost everyone is willing to share their information in exchange for some value, like access to a free social networking platform. It’s about privacy in the sense that users – the real “data owners” – are owed, in ethical terms, transparency about how their data will be used and the choice to grant permission in response.

So, how do we engineers bring permission and purpose out of the shadows and into the light to make a more ethical Internet that works better for data owners, AKA us humans who use the Internet?

The First Principles for Ethical Data

OK, there’s a problem we want to solve – we want digital products that are ethical and respect privacy by respecting purpose and permission. Still somewhat abstract, right? So we started by asking the key questions that define the requirements for those products, to understand what the first principles for privacy genuinely are.

We came up with five:

- Purpose – naturally, it starts here: make purpose a “first-class citizen” by declaring, enforcing, and auditing permissions.

- Control – afford data owners the means to control their data through the granting, revoking, and enforcing of permissions; essentially, the ability to execute data control operations.

- Recognition – make explicit the recognition and identification of all parties participating in a data transaction, even if unknown to the data owner; associated registration, verification, and revocation procedures are required.

- Transmission – support transmission of permissions from end-to-end, with subscription and broadcasting features across the chain and auditing and enforcement procedures within each link.

- Rectification – remedial steps are necessary to rectify instances when permissions or instructions are not respected; monitoring and alerting for such instances are required.
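To make the Purpose and Control principles a little less abstract, here is a minimal sketch in Python of what a purpose-bound permission check could look like. Everything in it is an assumption made for illustration: the `PermissionRegistry` class, its method names, and the in-memory storage are hypothetical and are not part of the Privacy Stack itself. The idea is simply that every grant, revocation, and access decision is tied to a named purpose and written to an audit log.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class PermissionRegistry:
    """Hypothetical in-memory store of purpose-bound permissions."""

    # Maps (owner_id, data_field) to the set of purposes the owner has granted.
    _grants: dict = field(default_factory=dict)
    # Append-only record of every grant, revocation, and access decision.
    audit_log: list = field(default_factory=list)

    def _record(self, action, owner_id, data_field, purpose, allowed=None):
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "owner_id": owner_id,
            "data_field": data_field,
            "purpose": purpose,
            "allowed": allowed,
        })

    def grant(self, owner_id, data_field, purpose):
        """Declare that the owner permits this field to be used for this purpose."""
        self._grants.setdefault((owner_id, data_field), set()).add(purpose)
        self._record("grant", owner_id, data_field, purpose)

    def revoke(self, owner_id, data_field, purpose):
        """Withdraw a previously granted purpose (a no-op if it was never granted)."""
        self._grants.get((owner_id, data_field), set()).discard(purpose)
        self._record("revoke", owner_id, data_field, purpose)

    def is_allowed(self, owner_id, data_field, purpose):
        """Enforce purpose limitation: only granted purposes pass the check."""
        allowed = purpose in self._grants.get((owner_id, data_field), set())
        self._record("access", owner_id, data_field, purpose, allowed)
        return allowed


registry = PermissionRegistry()
registry.grant("user-1", "phone_number", "account_security")
print(registry.is_allowed("user-1", "phone_number", "account_security"))
print(registry.is_allowed("user-1", "phone_number", "marketing"))
```

In this sketch, the phone number from the screenshot example could be used for account security but not for marketing, and the audit log keeps a record of both the grant and each attempted use. A real system would need durable storage, authentication of the parties involved, and alerting on denied requests.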

It Starts by Empowering Ethical Engineers

Enough philosophizing. It’s time for action. All these requirements are great, but they’re a bit like a product manager dumping a bunch of features on you without having to be hands-on-keyboard themselves. So how do we make it so?

Our audience here is clear: the best way we can effect change is through technologists – the architects, designers, and maintainers of data systems.

Our goal is then to empower technologists to architect, design, build, and maintain systems that respect privacy by design and process data ethically. The solution we need has to be:

- Flexible – the right solution is the one that can be implemented once and withstand the changing regulatory compliance landscape.

- Value-Add – by articulating the tradeoffs, a solution supports both securing the data and extracting value from it.

- Complete – with the proper controls in place, every stakeholder can act independently to orchestrate an organization’s posture toward privacy compliance.

The Reference Architecture

Through this process, we landed on a reference architecture for a Privacy Stack as a great first step toward a flexible, complete, and value-add way of building privacy by design into products everywhere.

What is a Reference Architecture?

- A common vocabulary

- Reusable designs

- Industry best practices

- Focused on concrete architectures

Note that a reference architecture is not a solution architecture – it is not directly implementable.

What Does a Reference Architecture Include?

That depends on what is being referenced! For the Privacy Stack, we include the following:

- Four data stack architectures:

  - Simple

  - Advanced

  - Processor

  - Ecosystem

- Components and Component Design

  - Permission Collection

  - Data Subject Rights fulfillment

  - Purpose limitation data access and processing

  - Integrating advanced statistical anonymization

  - Permission propagation

- Interfaces and APIs
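As one illustration of what a component such as permission propagation might involve, here is a rough Python sketch of a publish/subscribe pattern in which downstream systems are told whenever a permission changes. The class names (`PermissionBroadcaster`, `DownstreamProcessor`) and their methods are hypothetical and are not taken from the Privacy Stack's own component designs; they only show the general shape of the idea.

```python
class PermissionBroadcaster:
    """Hypothetical hub that notifies subscribers when a permission changes."""

    def __init__(self):
        self._subscribers = []

    def subscribe(self, callback):
        # callback is called as callback(owner_id, purpose, granted)
        self._subscribers.append(callback)

    def publish(self, owner_id, purpose, granted):
        # Forward the change to every subscribed downstream system.
        for callback in self._subscribers:
            callback(owner_id, purpose, granted)


class DownstreamProcessor:
    """Hypothetical downstream system that keeps its own copy of permissions."""

    def __init__(self, name):
        self.name = name
        self.allowed = set()

    def on_permission_change(self, owner_id, purpose, granted):
        if granted:
            self.allowed.add((owner_id, purpose))
        else:
            self.allowed.discard((owner_id, purpose))

    def can_process(self, owner_id, purpose):
        return (owner_id, purpose) in self.allowed


broadcaster = PermissionBroadcaster()
analytics = DownstreamProcessor("analytics")
broadcaster.subscribe(analytics.on_permission_change)

broadcaster.publish("user-1", "account_security", granted=True)
print(analytics.can_process("user-1", "account_security"))

# A revocation reaches the downstream system the same way.
broadcaster.publish("user-1", "account_security", granted=False)
print(analytics.can_process("user-1", "account_security"))
```

The point of the pattern is that a revocation made at the point of collection does not stop there: each link in the chain hears about it and can enforce it locally. Delivery guarantees, auditing at each link, and verification of who is subscribing are all left out of this sketch.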

What’s Next?

ThePrivacyStack.org is the official launch of V1 of the Privacy Stack Reference Architecture!

Our vision is for an open-source, transparent, and collaborative community where technologists are free to adopt the Privacy Stack and propose changes. Our community efforts are centered on GitHub – we want to hear from you!

- Check out the Privacy Stack on GitHub

- Sign up for intermittent updates from our team

- Questions? Comments? Want to get further involved? Reach out to contact@theprivacystack.org